First, the software stack for programming FPGAs has evolved. With Altera's help in particular, FPGAs have gained support for the OpenCL development environment. But not everyone is a fan of OpenCL.

Nvidia created its own CUDA parallel programming environment for its Tesla GPU accelerators. SRC Computer Co., Ltd., which had been providing hybrid CPU-FPGA systems to the defense and intelligence industries as early as 2002, entered the commercial market in the middle of 2016 and has continued to develop its Carte programming environment. Carte automatically converts C and Fortran programs into the FPGA's hardware description language (HDL).

Another factor driving the adoption of FPGAs is that it is getting harder to keep shrinking chip manufacturing processes, and harder to keep raising the performance of multi-core CPUs. CPU performance has made large jumps, but those gains have mainly gone into overall throughput rather than into the performance of an individual CPU core. (We know that architectural enhancements are difficult.) FPGAs and GPU accelerators, by contrast, offer a convincing improvement in performance per watt.

According to tests run by Microsoft, both CPU-FPGA and CPU-GPU hybrids hold their own on performance per watt when executing deep learning algorithms. GPUs run hotter and deliver similar performance per watt, but they also get more work done.

Improved performance per watt is why the world's most powerful supercomputers moved to parallel clusters in the late 1990s, and it is why they are now turning to hybrid machines instead of to Intel's next CPU-GPU hybrid, the Xeon Phi processor "Knights Landing" (KNL).

With the help of Altera FPGA coprocessors and the Knights Landing Xeon Phi processor, Intel can not only maintain its competitive advantage at the high end but also continue to lead the competition against the OpenPOWER alliance of Nvidia, IBM, and Mellanox.

Intel firmly believes that workloads in the hyperscale, cloud, and HPC markets will grow rapidly. To keep its computing business thriving in that situation, its only way forward is to become a seller of FPGAs itself; otherwise someone else will take that business away.

But that is not how Intel tells it. "We don't think this is a defensive game or anything else," Intel CEO Brian Krzanich said at a news conference after the Altera acquisition was announced.

“We think the Internet of Things and data centers are huge. These are the products that our customers want to build. 30% of our cloud workloads will be on these products; that prediction is based on how we see trends and the market developing.

“The point is that these workloads will be moved into silicon one way or another. We believe that the best approach is to use a combination of Xeon processors and FPGAs, with the best performance and cost advantages in the industry. This will bring better products and performance to the industrial field. In IoT, this will extend to the potential market against ASICs and ASSPs; in data centers, workloads will be moved into silicon to promote the rapid growth of the cloud.”

Krzanich explained: "You can think of an FPGA as a bunch of gates that can be programmed at any time. As customers see it, their algorithms become smarter over time as they learn. FPGAs can be used as accelerators in many areas: one can run a face search while also performing encryption, and it can be reprogrammed in essentially microseconds. This is much less expensive and more flexible than a single custom component that needs large volumes to justify it."

The advantages of FPGA in AI

Popular AI (artificial intelligence) models are basically composed of artificial neural networks, and these networks all require huge amounts of calculation to run. The following figure shows a simple artificial neural network that helps convey how much computation AI needs. This simple model has only 4 inputs and 3 outputs. Each circle represents a neural unit, the equivalent of a nerve cell, and each arrow represents at least one multiplication and one addition. So even this super-simple neural network requires more than 18 multiplications and additions each time it is run. The example presented in the previous article is much more complex and consists of 16 layers. Its first layer requires approximately 90 million multiplications and additions, while its second layer requires approximately 1.9 billion.

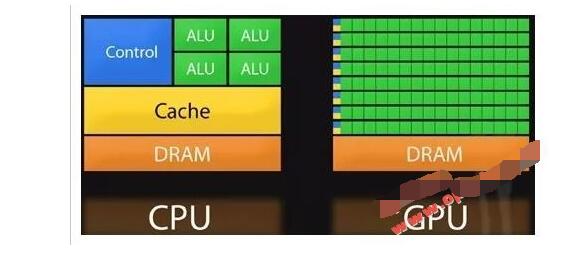
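As a rough sketch of the arithmetic described above, the following Python counts one multiply-add per connection. The hidden-layer size of the small network and the convolution shapes (3×3 kernels over a 224×224 feature map with 64 channels) are assumptions chosen for illustration, not values taken from the figure or from the previous article; they simply land close to the 90 million and 1.9 billion figures quoted.

```python
def dense_macs(layer_sizes):
    """Multiply-adds in a fully connected network: one per arrow between layers."""
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


def conv_macs(out_h, out_w, kernel, in_ch, out_ch):
    """Multiply-adds in one convolution layer (biases ignored)."""
    return out_h * out_w * out_ch * kernel * kernel * in_ch


# Hypothetical small network: 4 inputs, one hidden layer of 3 units, 3 outputs.
small_network = dense_macs([4, 3, 3])

# Hypothetical first two layers of a deep image network (assumed shapes).
first_layer = conv_macs(224, 224, kernel=3, in_ch=3, out_ch=64)
second_layer = conv_macs(224, 224, kernel=3, in_ch=64, out_ch=64)

print(f"small network: {small_network} multiply-adds")
print(f"first layer:   {first_layer:,} multiply-adds")
print(f"second layer:  {second_layer:,} multiply-adds")
```

The jump between the two convolution layers comes entirely from the input channel count growing from 3 to 64, which is why the cost rises so quickly with depth.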

The CPU architecture of a traditional computer struggles to keep up with calculations on this scale, so many AI workloads use a GPU for acceleration. GPUs were originally designed to speed up the processing of 3D images. Because a GPU does not have to execute complex control instructions the way a CPU does, most of its hardware resources can be devoted to calculation, so its computational power is much higher than that of a CPU with a similar level of integration. In the figure below, the green ALU blocks are the calculation units: they take up only part of the CPU, while a GPU of the same area is almost entirely filled with them. This huge difference in computing power is also why Bitcoin mining, which was very popular some time ago, ran on the GPUs in graphics cards. The potential of AI applications, coupled with demand from Bitcoin mining, pushed NVIDIA's stock price steadily upward.

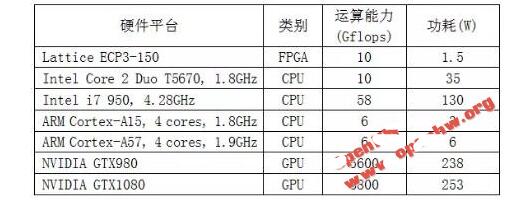

Newer CPU architectures are not standing still either; they too have strengthened their computing capabilities. For example, Intel's AVX instructions operate on 256 bits at a time, equivalent in hardware to eight 32-bit computing units (the later AVX-512 extension doubles this to 512 bits, or sixteen 32-bit lanes). ARM's VFPv3 is also focused on improving computing power and provides up to 32 64-bit registers. Current CPUs generally contain multiple cores, so the total number of computing units in a CPU probably ranges from tens to hundreds.

FPGAs embed similar hardware computing resources, and they did so much earlier. For example, the Lattice ECP3-150, introduced in 2007, embeds 320 DSP hard cores, equivalent to 80 32-bit computing units, whereas Intel's AVX was only announced in 2008. In raw computing power, then, FPGAs are comparable to CPUs built on the newer architectures. They cannot match GPUs, but once power consumption is taken into account, FPGAs leave GPUs far behind.

The performance and power consumption of some common CPUs and GPUs are listed here for reference. For ease of comparison, computing power is given uniformly in GFLOPS, that is, billions of floating-point operations per second. Why floating-point arithmetic? Because an AI neural network performs many multiplications and additions between input and output, it easily produces large numbers. A 32-bit integer has a maximum range on the order of 10 to the 9th power (about 2.1 billion when signed), and an overflow error occurs if that range is exceeded. Floating-point numbers are stored in a form of scientific notation, so a 32-bit floating-point number can reach roughly 10 to the 38th power, which ensures that most AI operations do not overflow.
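The difference in range between 32-bit integers and 32-bit floats can be checked with a short Python sketch. The helper names `wrap_int32` and `to_float32` are made up for this illustration, and the wraparound emulates what typical two's-complement hardware does rather than any particular FPGA or GPU.

```python
import struct

INT32_MAX = 2**31 - 1                      # 2,147,483,647, about 2.1e9
FLOAT32_MAX = (2 - 2**-23) * 2.0**127      # largest finite float32, about 3.4e38


def wrap_int32(value):
    """Emulate 32-bit two's-complement wraparound on overflow."""
    return (value + 2**31) % 2**32 - 2**31


def to_float32(value):
    """Round a Python number to the nearest 32-bit float and back."""
    return struct.unpack("<f", struct.pack("<f", float(value)))[0]


# A single product of two modest values already exceeds the int32 range.
product = 50_000 * 50_000                  # 2.5e9 > INT32_MAX

print("int32 max:        ", INT32_MAX)
print("float32 max:      ", FLOAT32_MAX)
print("product as int32: ", wrap_int32(product))   # wraps to a negative number
print("product as float32:", to_float32(product))  # still fits comfortably
```

The float result stays in range at the cost of precision: a 32-bit float carries only about 7 significant decimal digits, which neural networks usually tolerate.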

From the data above it is easy to see that FPGAs combine high computing power with low power consumption. GPUs have the strongest computing power, but their power consumption is roughly that of half a rice cooker, which makes them better suited to training AI in the laboratory. Using one in a mobile device would be like running around with half a rice cooker in your pocket. Once an AI model has been trained and needs to run on a mobile device, an FPGA is definitely a good choice.

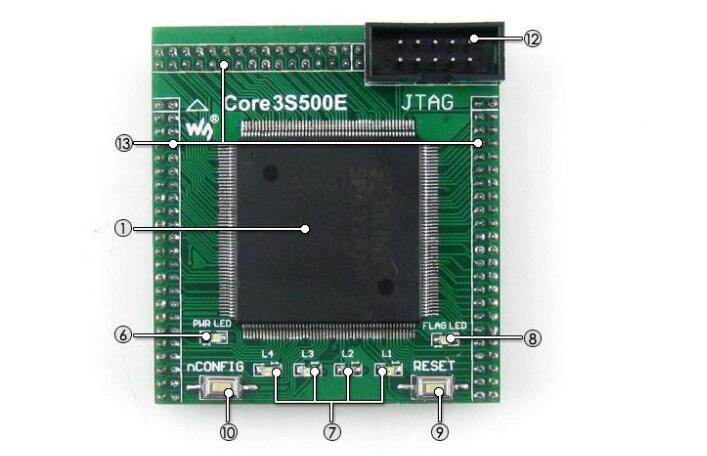

Only a handful of mobile phones are equipped with FPGA chips

According to Forbes, Chipworks said that the FPGA chip is manufactured by Lattice Semiconductor of the United States and that its model is “ICE5LP4K”. It is designed mainly for low-power devices such as mobile phones. FPGA chips can be reconfigured after they have been manufactured and installed in a device.

It is worth noting that in data centers, such chips are used to accelerate machine learning (ML) applications. In smartphones, Apple is introducing an FPGA chip into the iPhone design for the first time, but Samsung Electronics began using Lattice Semiconductor's FPGA chips as early as 2014, when it launched its flagship Galaxy S5. The function of the FPGA chip in that phone was not described, and the chip was no longer used in the Galaxy S6.

Regarding the FPGA chip in the iPhone 7, Kevin Krewell, chief analyst at market research firm Tirias Research, said that Apple's move is unusual and that building an FPGA chip into a smartphone adds to the production cost. Only a handful of mobile phones have been equipped with one.

Krewell believes that Apple has equipped the iPhone with an FPGA chip for the first time in order to run machine learning algorithms on it, and that features related to advanced health monitoring may follow in the future.

The chip might also provide the extra image processing needed for as-yet-unannounced virtual reality (VR) or augmented reality (AR) features. However, it is also possible that the FPGA chip is only a stopgap, and that Apple will eventually adopt a dedicated chip in a future iPhone.

Since Apple has not disclosed the function of the FPGA chip, it is still not clear what the chip has been configured to do, or whether the iPhone 7 is already using it. But because an FPGA can be reprogrammed, Apple may change the capabilities of this chip in the future by upgrading the iPhone 7 firmware.

Apple added more AI elements to the iPhone 7 series. For example, the advanced camera technology of the iPhone 7 relies on the computer vision algorithms of the new video signal processor, which is Apple's own in-house, proprietary design.

In developing AI, Apple is taking a different route from Google, paying more attention to the device itself than to cloud computing. Apple's reasoning is that uploading data to the cloud is not as secure or as private as computing directly on the device.

In addition to Lattice Semiconductor, the two large FPGA makers, Intel's Altera and Xilinx, also produce many FPGA chips suited to data centers. Data centers are where FPGA chips first became popular, because FPGAs can accelerate machine learning software there. This potential is one of the main reasons why Intel bought Altera for $16.7 billion in 2015.

Intel has long been eager to maintain its leading position in the global data center processor market, so it has begun to pair its server processors with Altera's FPGA chips. Microsoft has also invested heavily in producing its own custom FPGA chips in order to strengthen the AI computing capabilities of its data centers.
